A collection of open source and IBM foundation models is available for inferencing in IBM watsonx.ai. Find the foundation models that best suit the needs of your generative AI application and your budget.

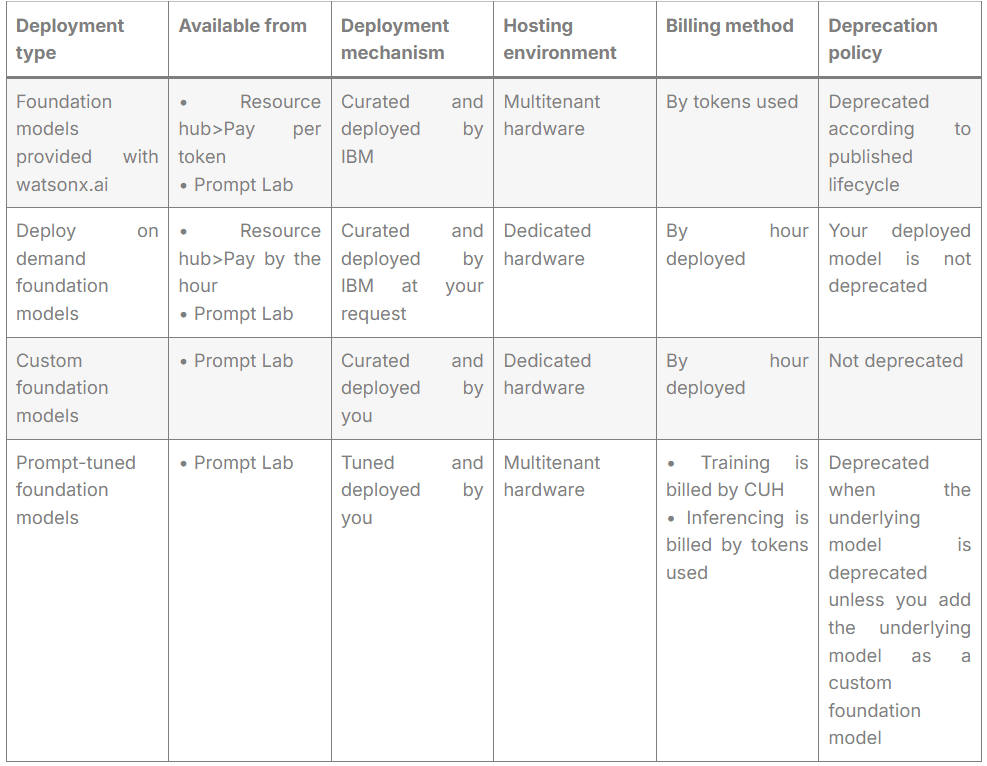

The foundation models that are available for inferencing from watsonx.ai are hosted in various ways:

- Foundation models provided with watsonx.ai: IBM-curated foundation models that are deployed on multitenant hardware by IBM and are available for inferencing. You pay by tokens used. See Foundation models provided with watsonx.ai.

- Deploy on demand foundation models: An instance of an IBM-curated foundation model that you deploy and that is dedicated for your inferencing use. Only colleagues who are granted access to the deployment can inference the foundation model. A dedicated deployment means faster and more responsive interactions without rate limits. You pay for hosting the foundation model by the hour. See Deploy on demand foundation models.

- Custom foundation models: Foundation models curated by you that you import and deploy in watsonx.ai. The instance of the custom foundation model that you deploy is dedicated for your use. A dedicated deployment means faster and more responsive interactions. You pay for hosting the foundation model by the hour. See Custom foundation models.

- Prompt-tuned foundation models: A subset of the available foundation models that can be customized for your needs by prompt tuning the model from the API or Tuning Studio. A prompt-tuned foundation model relies on the underlying IBM-deployed foundation model. You pay for the resources that you consume to tune the model. After the model is tuned, you pay by tokens used to inference the model. See Prompt-tuned foundation models.

If you want to deploy foundation models in your own data center, you can purchase watsonx.ai software. For more information, see Overview of IBM watsonx as a Service and IBM watsonx.governance software.

Deployment methods comparison

To help you choose the right deployment method, review the comparison table.

For details on how model pricing is calculated and monitored, see Billing details for generative AI assets.
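One way to compare paying by tokens used with paying for hosting by the hour is a simple break-even estimate. The sketch below is illustrative only: the function names are hypothetical, and the prices in the usage lines are made-up placeholders, not IBM list prices. Substitute the rates from your own plan.

```python
def token_cost(tokens: int, price_per_1k_tokens: float) -> float:
    """Cost of pay-by-token inferencing for a given token volume."""
    return tokens / 1000 * price_per_1k_tokens


def hosting_cost(hours: float, hourly_rate: float) -> float:
    """Cost of a dedicated deployment that is billed by the hour."""
    return hours * hourly_rate


def break_even_tokens(hours: float, hourly_rate: float,
                      price_per_1k_tokens: float) -> float:
    """Token volume at which both billing models cost the same."""
    return hosting_cost(hours, hourly_rate) / price_per_1k_tokens * 1000


# Placeholder numbers (assumptions, not real prices):
# 100 hosted hours at 5.0 per hour versus 0.5 per 1,000 tokens.
threshold = break_even_tokens(hours=100, hourly_rate=5.0, price_per_1k_tokens=0.5)
print(f"Dedicated hosting costs less above {threshold:,.0f} tokens")
```

Below the threshold, paying by tokens is cheaper under these assumptions; above it, hourly hosting is. The estimate ignores rate limits and responsiveness, which can also influence the choice.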

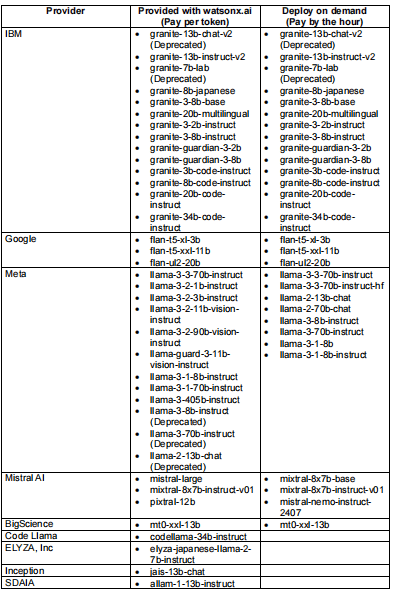

Supported foundation models by deployment method

Various foundation models are available from watsonx.ai that you can either use immediately or that you can deploy on dedicated hardware for use by your organization.

Foundation models provided with watsonx.ai

A collection of open source and IBM foundation models is deployed in IBM watsonx.ai. You can prompt these foundation models in the Prompt Lab or programmatically.
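As a rough illustration of prompting a model programmatically, the following sketch builds an HTTP request with the Python standard library but does not send it. The endpoint host and path, the version date, the payload field names, and the model ID format are assumptions based on the public watsonx.ai REST API; verify them against the current API reference. The helper `build_generation_request`, the token, and the project ID are hypothetical placeholders.

```python
import json
import urllib.request

# Assumed endpoint: the regional host and version date may differ for your account.
ASSUMED_URL = (
    "https://us-south.ml.cloud.ibm.com/ml/v1/text/generation?version=2023-05-29"
)


def build_generation_request(prompt: str, model_id: str, project_id: str,
                             bearer_token: str, max_new_tokens: int = 100):
    """Build (but do not send) a POST request for text generation."""
    payload = {
        "model_id": model_id,        # assumed field name
        "input": prompt,             # assumed field name
        "project_id": project_id,    # assumed field name
        "parameters": {"max_new_tokens": max_new_tokens},
    }
    return urllib.request.Request(
        ASSUMED_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Accept": "application/json",
            "Authorization": f"Bearer {bearer_token}",
        },
        method="POST",
    )


request = build_generation_request(
    prompt="Summarize the following text in one sentence: ...",
    model_id="ibm/granite-13b-instruct-v2",   # assumed ID format
    project_id="YOUR_PROJECT_ID",             # placeholder
    bearer_token="YOUR_IAM_TOKEN",            # placeholder
)
print(request.get_method(), request.full_url)
```

To actually send the request, you would pass it to `urllib.request.urlopen` with a valid token and project ID.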

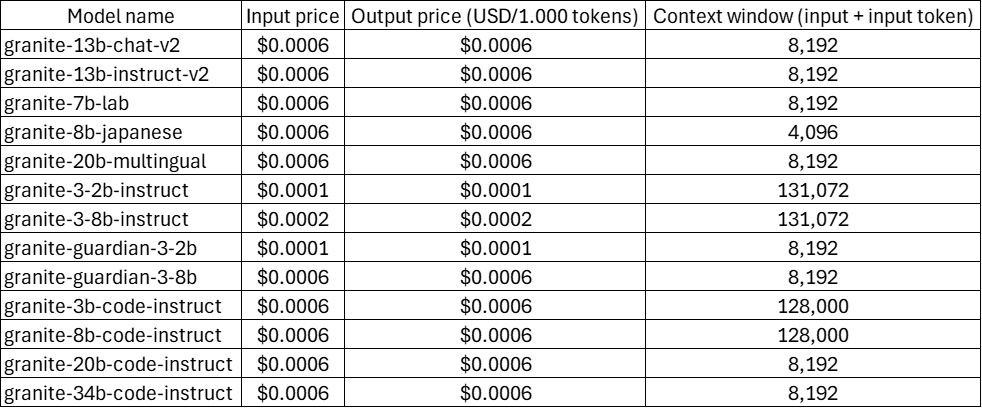

IBM foundation models provided with watsonx.ai

The following table lists the supported IBM foundation models that IBM provides for inferencing.

Use is measured in Resource Units (RU); each unit is equal to 1,000 tokens from the input and output of foundation model inferencing. For details on how model pricing is calculated and monitored, see Billing details for generative AI assets.
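The Resource Unit arithmetic can be sketched as follows. The function name is hypothetical, and the sketch returns a fractional value because the text does not say how partial units are rounded for billing; see the billing documentation for the authoritative rules.

```python
TOKENS_PER_RU = 1_000  # from the text: one Resource Unit equals 1,000 tokens


def resource_units(input_tokens: int, output_tokens: int) -> float:
    """Resource Units consumed by one or more inference requests.

    Both input and output tokens count toward usage.
    """
    if input_tokens < 0 or output_tokens < 0:
        raise ValueError("token counts must be non-negative")
    return (input_tokens + output_tokens) / TOKENS_PER_RU


# Example: a 1,200-token prompt that produces a 300-token response.
print(resource_units(1200, 300))
```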

Some IBM foundation models are also available from third-party repositories, such as Hugging Face. IBM foundation models that you obtain from a third-party repository are not indemnified by IBM. Only IBM foundation models that you access from watsonx.ai are indemnified by IBM. For more information about contractual protections related to IBM indemnification, see the IBM Client Relationship Agreement and IBM watsonx.ai service description.

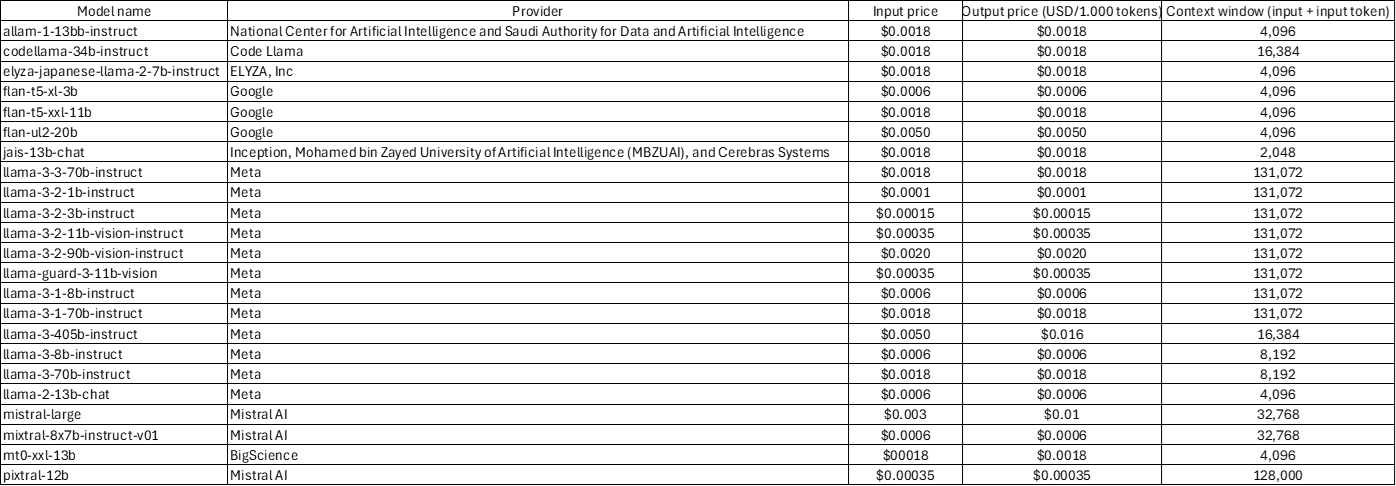

Third-party foundation models provided with watsonx.ai

The following table lists the supported third-party foundation models that are provided with watsonx.ai.

Use is measured in Resource Units (RU); each unit is equal to 1,000 tokens from the input and output of foundation model inferencing. For details on how model pricing is calculated and monitored, see Billing details for generative AI assets.

- For more information about the supported foundation models that IBM provides for embedding and reranking text, see Supported encoder foundation models.

- For a list of which models are provided in each regional data center, see Regional availability of foundation model.

- For information about pricing and rate limiting, see watsonx.ai Runtime plans.

Custom foundation models

In addition to working with foundation models that are curated by IBM, you can upload and deploy your own foundation models. After the custom models are deployed and registered with watsonx.ai, you can create prompts that inference the custom models from the Prompt Lab and from the watsonx.ai API.

To learn more about how to upload, register, and deploy a custom foundation model, see Deploying a custom foundation model.

Deploy on demand foundation models

Choose a foundation model from a set of IBM-curated models to deploy for the exclusive use of your organization.

For more information about how to deploy a foundation model on demand, see Deploying foundation models on-demand.

Note: Foundation models that you can deploy on demand are available only in the Dallas data center.

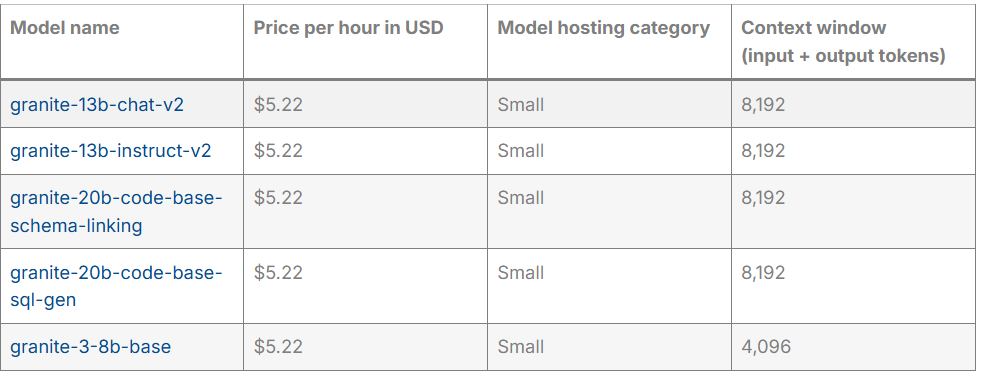

Deploy on demand foundation models from IBM

The following table lists the IBM foundation models that are available for you to deploy on demand.

Some IBM foundation models are also available from third-party repositories, such as Hugging Face. IBM foundation models that you obtain from a third-party repository are not indemnified by IBM. Only IBM foundation models that you access from watsonx.ai are indemnified by IBM. For more information about contractual protections related to IBM indemnification, see the IBM Client Relationship Agreement and IBM watsonx.ai service description.

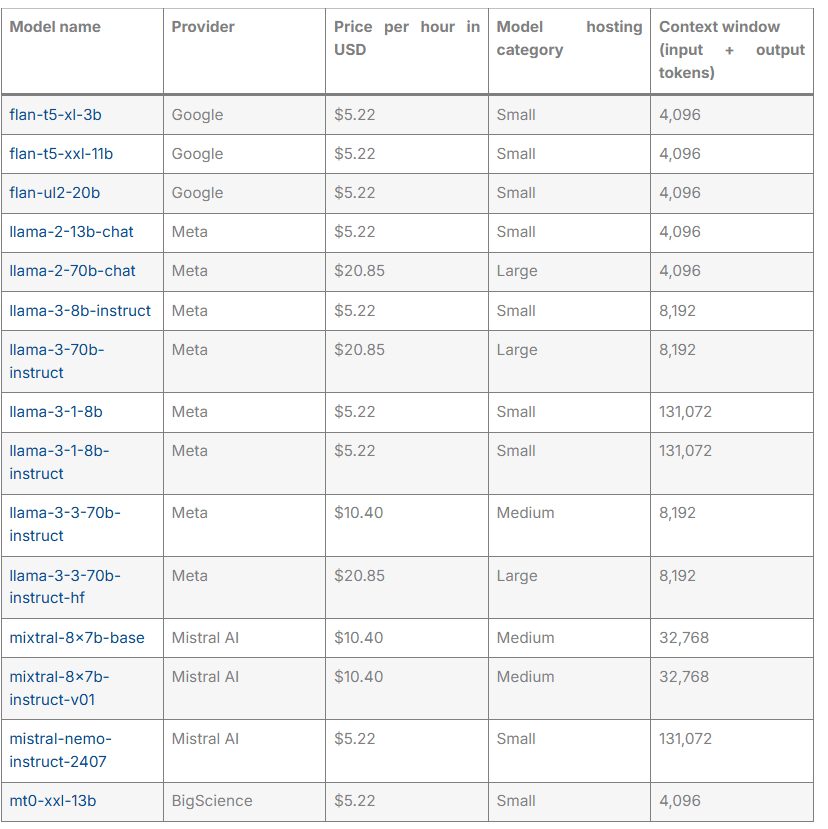

Deploy on demand foundation models from third parties

The following table lists the third-party foundation models that are available for you to deploy on demand.

Prompt-tuned foundation models

You can customize the following foundation models by prompt tuning them in watsonx.ai:

- flan-t5-xl-3b

- granite-13b-instruct-v2

For more information, see Tuning Studio.
